
Part 5: Axioms, Lemmas, and Main Theorem

Proof Boundary

I am not proving the whole metaphysical carnival I constructed. I am proving the internal mathematics of the system and the conditions under which explicit predictive state beats thinner baselines, can be compressed, can be decomposed into slow and fast pieces, and can induce a well-defined proposition ranking. The rest would be impossible from the formalism alone, and I do not get extra points for pretending otherwise.

The boundary keeps the proof mostly honest and keeps my pseudo-Kantian philosophical leanings from being smuggled into the math. I will now build the case systematically from everything discussed so far. A caveat: I do not have formal training in proofs. I relied mostly on the last two generations of AI models for this formalization, and most of my own work was structural: making sure the notation did not drift and that the results made enough sense to publish. I cannot fully trust this proof, so if you see anything off, please email me at Bjorn@psi.dev.

Because Parts 3 and 4 now fix the sign of the training objective and the meaning of the slow refresh, I am going to keep those conventions explicit here too instead of quietly proving against a stale version of the machinery.

5.1 Axioms

I begin with axioms because these are the primitive commitments the rest of the machine will run on. If I do not pin these down explicitly, every later lemma quietly changes its meaning depending on how charitable the reader feels like being.

Axiom 1 (Ambient space). Let \(\mathcal N\) be a real separable Hilbert space, and let \(\mathcal M^{\mathrm{spec}} \subset \mathcal N\) be the finite-dimensional accessible subspace preserved by the lineage. Let

$$ P^{\mathrm{spec}} : \mathcal N \to \mathcal M^{\mathrm{spec}} $$

be the orthogonal projection onto that accessible subspace.

Axiom 2 (Individual instantiation). For each observer \(i\), there exists an inherited template \(G_i\), a realized embedding

and a phenomenal state \(\phi_{i,t}\) at time \(t\).

Axiom 3 (Task-conditioned predictive state). For each task \(\tau\) and horizon \(\Delta\), there exists a predictive observer-state

and an admissible proposition set \(\mathcal X_{i,t}^{\mathrm{adm}}\) such that, for every \(x_t \in \mathcal X_{i,t}^{\mathrm{adm}}\),

Axiom 4 (Operational slow/fast approximation). For a task \(\tau\) and horizon \(\Delta\), define the measurable operational state by

with

and, in online form,

Here \(g_i^{\mathrm{slow}}\) and \(g_{i,t}^{\mathrm{fast},\tau}\) are source-aware and regime-aware categorical trace pools built under a fixed slot schema with null and mask behavior for missing cells. Apparent contradictions are first contextually lifted into richer typed traces before later maps decide whether they are comparable, axis-conditioned, or genuinely unresolved.

The slow term moves on a longer timescale. If \(\hat T_i^{\mathrm{new}}\) is the refreshed durable estimate obtained from newly accumulated slow evidence, then the operational refresh is

Equivalently, if

then

Assume this state is an operational approximation to \(q_{i,t}^{(\tau,\Delta)}\).

Axiom 5 (Transition factorization). For each task \(\tau\), the transition law factors through the task-relevant encoding of the proposition. That is, there exists a measurable transition map \(G_\theta\) such that

Axiom 6 (Axis-retention rule). At evolutionary stage \(\tau\), a candidate axis is retained only when its net fitness contribution is positive. If \(\Delta \Phi_{\tau} > 0\), the axis is added to the species-level access structure; otherwise it is not retained.

Axiom 7 (Auxiliary readout factorization). For each auxiliary probe \(A_{i,t+\Delta}^{(m,\tau)}\) included in the task, its conditional law factors through the predictive state. Equivalently, there exists a measurable readout \(R_m\) such that

Whenever the probe index \(m\) is suppressed below, \(A_{i,t+\Delta}^{(\tau)}\) or \(\hat a_{i,t+\Delta}^{(\tau)}\) denotes the full auxiliary bundle \((A_{i,t+\Delta}^{(1,\tau)}, \dots, A_{i,t+\Delta}^{(M,\tau)})\) or its prediction.

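Whenever the benchmark training loop appears below, the optimize-by-descent objective is

$$ \mathcal L(\theta) = \mathcal L_{\mathrm{task}}(\theta) + \sum_{m=1}^{M}\lambda_m \mathcal L_{\mathrm{probe},m} + \lambda_{\mathrm{reg}}\Omega(\theta), \qquad \lambda_m,\lambda_{\mathrm{reg}} \ge 0, $$

with gradient descent update

$$ \theta \leftarrow \theta - \eta \nabla_\theta \mathcal L(\theta), \qquad \eta > 0. $$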

This is not a fresh metaphysical axiom. It is just the sign-consistent operational convention inherited from Parts 3 and 4, and I am writing it here once so the proof section does not silently revert to the wrong loop.
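The sign convention can be checked on a toy problem. The following is a minimal sketch, assuming a scalar parameter and quadratic stand-ins for each term; the names `task_loss`, `probe_loss`, `omega`, the weights, and the learning rate are hypothetical and are not the benchmark's actual heads. The only point is that every term enters with a nonnegative weight and the update steps against the gradient.

```python
# Toy descent convention: total = task + lam_probe * probe + lam_reg * omega.
# All three losses are hypothetical quadratics with a known joint minimizer.

LAM_PROBE = 0.5      # assumed probe weight, must be >= 0
LAM_REG = 0.1        # assumed regularization weight, must be >= 0
LEARNING_RATE = 0.1  # assumed step size


def task_loss(theta):
    return (theta - 3.0) ** 2


def probe_loss(theta):
    return (theta - 1.0) ** 2


def omega(theta):
    return theta ** 2


def total_loss(theta):
    return task_loss(theta) + LAM_PROBE * probe_loss(theta) + LAM_REG * omega(theta)


def total_grad(theta):
    # Analytic derivative of total_loss with respect to theta.
    return 2.0 * (theta - 3.0) + LAM_PROBE * 2.0 * (theta - 1.0) + LAM_REG * 2.0 * theta


def descend(theta, steps):
    for _ in range(steps):
        theta = theta - LEARNING_RATE * total_grad(theta)  # step against the gradient
    return theta


theta_start = 0.0
theta_end = descend(theta_start, steps=200)
# Setting total_grad to zero gives theta = (3 + LAM_PROBE) / (1 + LAM_PROBE + LAM_REG).
theta_closed_form = (3.0 + LAM_PROBE) / (1.0 + LAM_PROBE + LAM_REG)
```

Flipping the sign of any weighted term while keeping the descent update would push the parameter away from that term's minimizer, which is the stale-loop failure this convention is meant to rule out.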

These are all of the axioms. From here onward, the proofs are internal to the system.

5.2 Definitions: sufficiency and minimality

I need these definitions explicitly because otherwise “state” can mean anything from the whole person to a CRM row.

Definition 1 (Task-sufficient predictive state). A state \(q_{i,t}^{(\tau,\Delta)}\) is sufficient for task \(\tau\) and horizon \(\Delta\) if, for every admissible proposition \(x_t \in \mathcal X_{i,t}^{\mathrm{adm}}\),

Definition 2 (Minimal predictive state). A sufficient state \(q_{i,t}^{(\tau,\Delta)}\) is minimal if, for every other sufficient state \(r_{i,t}^{(\tau,\Delta)}\), there exists a measurable map \(h\) such that

$$ q_{i,t}^{(\tau,\Delta)} = h\big(r_{i,t}^{(\tau,\Delta)}\big) $$

almost surely.

This is the cleanest way to state identifiability here. Not “the one true coordinates of the mind,” but “the smallest sufficient state is unique up to admissible reparameterization.”

5.3 Lemma 1: Best accessible approximation

I prove this first because projection has to stop being metaphor here. If the species-level slice is going to do any real work, it has to be the best accessible approximation in a strict sense, not just the slice I like philosophically.

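Lemma 1. For every noumenal state \(\mathbf n_t \in \mathcal N\),

$$ P^{\mathrm{spec}} \mathbf n_t = \arg\min_{\mathbf m \in \mathcal M^{\mathrm{spec}}} \|\mathbf n_t - \mathbf m\|_{\mathcal N}. $$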

That is, the lineage-accessible projection is the unique closest point to the full noumenal state among all points in the accessible subspace.

Proof. Because \(\mathcal M^{\mathrm{spec}}\) is finite-dimensional, it is a closed subspace of the Hilbert space \(\mathcal N\). Every closed subspace of a Hilbert space admits a unique orthogonal projection. By the Hilbert projection theorem, that projection is exactly the unique element of the subspace minimizing the distance to \(\mathbf n_t\). Therefore \(P^{\mathrm{spec}} \mathbf n_t\) is the best accessible approximation to the noumenal state. QED.
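A small numerical check of Lemma 1, as a sketch only: it assumes a three-dimensional ambient space standing in for \(\mathcal N\) and a hypothetical accessible subspace spanned by an orthonormal basis. The helper names and the specific vectors are illustrative assumptions.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def project(n, orthonormal_basis):
    """Orthogonal projection of n onto the span of an orthonormal basis."""
    out = [0.0] * len(n)
    for e in orthonormal_basis:
        c = dot(n, e)
        out = [o + c * ek for o, ek in zip(out, e)]
    return out


def distance(u, v):
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


# Hypothetical accessible subspace: the x-y plane inside R^3.
basis = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
n_t = [2.0, -1.0, 3.0]

p = project(n_t, basis)
best = distance(n_t, p)

# A few other points of the same subspace, for comparison.
others = [[2.5, -1.0, 0.0], [0.0, 0.0, 0.0], [2.0, 4.0, 0.0]]
other_distances = [distance(n_t, m) for m in others]

# The residual n_t - p is orthogonal to every basis vector.
residual = [a - b for a, b in zip(n_t, p)]
residual_overlaps = [dot(residual, e) for e in basis]
```

Every entry of `other_distances` exceeds `best`, and the residual has zero overlap with the basis, which is the orthogonality condition that characterizes the minimizer in the Hilbert projection theorem.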

5.4 Lemma 2: Novelty test and monotonicity of retained axes

This is where I force the evolutionary step to become exact. A candidate axis only counts as new if it survives subtraction by what is already captured, and it only stays if it actually improves the objective rather than just sounding biologically plausible.

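Lemma 2. Let \(\mathcal M_{\tau}^{\mathrm{spec}}\) be the current species-level accessible subspace, and let \(\Delta \mathbf v \in \mathcal N\) be a candidate mutation. Define the orthogonal residual

$$ \Delta \mathbf v_{\perp} = \Delta \mathbf v - P_{\tau}^{\mathrm{spec}} \Delta \mathbf v, $$

where \(P_{\tau}^{\mathrm{spec}}\) is the orthogonal projection onto \(\mathcal M_{\tau}^{\mathrm{spec}}\).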

Then:

- if \(\Delta \mathbf v \in \mathcal M_{\tau}^{\mathrm{spec}}\), we have \(\Delta \mathbf v_{\perp} = 0\), so the mutation adds no genuinely new axis;

- if \(\Delta \mathbf v_{\perp} \neq 0\) and \(\Delta \Phi_{\tau} > 0\), then retaining the normalized residual strictly increases the evolutionary objective

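Proof. The first claim is immediate: if \(\Delta \mathbf v\) already lies in the current subspace, its orthogonal projection onto that subspace is itself, hence the residual is zero. For the second claim, if \(\Delta \mathbf v_{\perp} \neq 0\), normalize it and consider the updated space

$$ \mathcal M_{\tau+1}^{\mathrm{spec}} = \mathcal M_{\tau}^{\mathrm{spec}} \oplus \operatorname{span}\left\{ \frac{\Delta \mathbf v_{\perp}}{\|\Delta \mathbf v_{\perp}\|} \right\}. $$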

By definition of the retention rule, \(\Delta \Phi_{\tau} > 0\) exactly when the expected reproductive gain of adding the axis exceeds its maintenance cost. Hence

So accepted axes are precisely those that increase the evolutionary objective. QED.
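The two-stage test in Lemma 2 (novelty first, then fitness) can be sketched as follows. This is an illustration under assumptions: the subspace is given by an orthonormal basis in a small Euclidean space, `delta_phi` is supplied as a number rather than computed from any fitness model, and the function names and tolerance are hypothetical.

```python
import math


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def residual(candidate, orthonormal_basis):
    """Subtract from the candidate everything the current basis already captures."""
    r = list(candidate)
    for e in orthonormal_basis:
        c = dot(candidate, e)
        r = [ri - c * ei for ri, ei in zip(r, e)]
    return r


def try_retain(orthonormal_basis, candidate, delta_phi, tol=1e-12):
    """Return the (possibly extended) basis after the novelty and fitness tests."""
    r = residual(candidate, orthonormal_basis)
    norm = math.sqrt(dot(r, r))
    if norm <= tol:      # no new axis: the candidate already lies in the subspace
        return orthonormal_basis
    if delta_phi <= 0:   # genuinely new axis, but net fitness contribution is not positive
        return orthonormal_basis
    return orthonormal_basis + [[ri / norm for ri in r]]


basis = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
inside = try_retain(basis, [3.0, -2.0, 0.0], delta_phi=1.0)   # fails the novelty test
costly = try_retain(basis, [1.0, 1.0, 2.0], delta_phi=-0.5)   # fails the fitness test
kept = try_retain(basis, [1.0, 1.0, 2.0], delta_phi=0.5)      # passes both
```

Only the last call extends the basis, and the axis it adds is the normalized residual rather than the raw candidate, so the retained structure stays orthonormal.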

5.5 Lemma 3: Task-equivalence and composability

This is needed because “composable” means nothing unless I can say exactly when two different things are the same for the task. This is the place where physical difference gets subordinated to observer-relevant difference.

Lemma 3. Suppose two propositions \(x_t^{(1)}\) and \(x_t^{(2)}\) have identical task-relevant projected encodings:

Then, for fixed \(\hat T_i\), \(z_{i,t}\), \(c_{i,t}\), and \(w_t\), the next predicted predictive state is identical:

Proof. By Axiom 5, the transition map depends on the proposition only through its task-relevant encoding. If two propositions have the same projected encoding, then every argument of \(G_\theta\) is the same in the two evaluations. Therefore the outputs must be equal. That is the precise sense in which realities become composable here: different physical propositions may be equivalent for a task if they project to the same observer-relevant coordinates. QED.

5.6 Lemma 4: Recursive rollout

I prove rollout separately because a one-step update is not yet a world model in the strong sense I want. The minute the system can be iterated without ambiguity, it stops being a static fit and starts becoming something that can actually simulate a trajectory.

Lemma 4. Fix a task \(\tau\). Let the one-step world model be

Then for any finite horizon \(k \geq 1\), the multi-step rollout

is uniquely defined.

Proof. The case \(k=1\) is given by definition. Assume the rollout exists and is unique for some \(k\). Then the \((k+1)\)-step rollout is obtained by applying the same deterministic map \(\mathcal W_{\tau}\) to the unique state produced at step \(k\) together with the next proposition \(x_{t+k}\). Hence the \((k+1)\)-step rollout also exists and is unique. By induction, the result holds for every finite horizon. QED.
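The induction in the proof is the same structure as a left fold over the proposition path. A minimal sketch, assuming a scalar state and a hypothetical deterministic one-step map `toy_step` (the real world model also takes the slow profile, context, and exogenous inputs, which are omitted here):

```python
def rollout(step, state, propositions):
    """Apply a deterministic one-step map along a finite proposition path."""
    trajectory = [state]
    for x in propositions:
        state = step(state, x)
        trajectory.append(state)
    return trajectory


def toy_step(state, x):
    # Hypothetical one-step map: decay the state and add the proposition's effect.
    return 0.5 * state + x


path = [1.0, 0.0, 2.0]
traj = rollout(toy_step, 4.0, path)

# The k-step result is the nested composition of the one-step map.
nested = toy_step(toy_step(toy_step(4.0, path[0]), path[1]), path[2])
```

Because `toy_step` is a function of its arguments and nothing else, repeating the rollout with the same start state and path reproduces the same trajectory, which is the uniqueness claim in miniature.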

5.7 Theorem 1: The value of explicit history

This is the benchmark theorem in plain language: if the past carries usable signal, then models that throw the past away are leaving information on the table by construction. I want that point stated mathematically so “state matters” stops sounding like an intuition and starts sounding like an inequality.

Theorem 1. Let the observable task outcome be

Define the static feature bundle

and the richer history-aware bundle

Then:

- under log loss, the Bayes-optimal history-aware predictor cannot do worse than the Bayes-optimal static predictor;

- for binary outcomes under Brier score, the same inequality holds;

- the inequality is strict whenever \(Y\) is not conditionally independent of history given the static bundle.

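Proof. Under log loss, the Bayes-optimal risk is the conditional entropy:

$$ R^{\star}_{\log}(X) = H(Y \mid X). $$

Because conditioning on more information cannot increase conditional entropy,

$$ H(Y \mid X_0, H_{i,\le t}) \le H(Y \mid X_0). $$

So the Bayes-optimal predictor using history cannot do worse than the Bayes-optimal predictor without it.

For binary outcomes under Brier score, the Bayes-optimal prediction is \(\mathbb E[Y \mid X]\), and the corresponding optimal risk is

$$ R^{\star}_{\mathrm{Brier}}(X) = \mathbb E\big[\operatorname{Var}(Y \mid X)\big]. $$

Conditioning on a larger sigma-algebra cannot increase conditional variance in expectation, hence

$$ \mathbb E\big[\operatorname{Var}(Y \mid X_0, H_{i,\le t})\big] \le \mathbb E\big[\operatorname{Var}(Y \mid X_0)\big]. $$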

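The inequality is strict whenever history carries signal about \(Y\) not already contained in \(X_0\), equivalently whenever \(Y \not\mathrel{\perp\mspace{-10mu}\perp} H_{i,\le t} \mid X_0\). Under log loss the gap between the two risks is the conditional mutual information \(I(Y; H_{i,\le t} \mid X_0)\), which is positive exactly when that conditional independence fails. For binary \(Y\) under Brier score the gap is \(\mathbb E\big[(\mathbb E[Y \mid X_0, H_{i,\le t}] - \mathbb E[Y \mid X_0])^2\big]\), which is positive exactly when the conditional mean, and hence the conditional law of a binary outcome, depends on history. Therefore explicit history is guaranteed to help in principle whenever it contains conditionally relevant information. QED.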
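Both inequalities can be verified on a toy joint distribution. The sketch below assumes one binary static feature, one binary history summary, and a binary outcome that copies the history bit with probability 0.9; the distribution and the helper `conditional_stats` are hypothetical and chosen only so the numbers are easy to check by hand.

```python
import math
from collections import defaultdict


def conditional_stats(joint, cond_idx):
    """Return (H(Y|X) in bits, E[Var(Y|X)]) for a binary outcome Y.

    joint maps (x0, h, y) -> probability; cond_idx selects which of
    (x0, h) the predictor is allowed to condition on.
    """
    p_x = defaultdict(float)
    p_xy = defaultdict(float)
    for (x0, h, y), p in joint.items():
        key = tuple((x0, h)[k] for k in cond_idx)
        p_x[key] += p
        p_xy[(key, y)] += p
    entropy = -sum(p * math.log2(p / p_x[key])
                   for (key, _y), p in p_xy.items() if p > 0)
    expected_variance = 0.0
    for key, px in p_x.items():
        q = p_xy[(key, 1)] / px  # P(Y = 1 | X = key)
        expected_variance += px * q * (1.0 - q)
    return entropy, expected_variance


# Toy joint: x0 and h are independent fair bits, and y equals h with probability 0.9.
joint = {}
for x0 in (0, 1):
    for h in (0, 1):
        for y in (0, 1):
            joint[(x0, h, y)] = 0.25 * (0.9 if y == h else 0.1)

static_entropy, static_brier = conditional_stats(joint, cond_idx=(0,))
history_entropy, history_brier = conditional_stats(joint, cond_idx=(0, 1))
```

In this toy case the static feature is uninformative, so the static predictor sits at one bit of log loss and a Brier risk of 0.25, while the history-aware predictor is strictly better on both. If `y` were drawn independently of `h`, the two pairs would coincide, which is the equality case of the theorem.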

5.8 Theorem 2: Minimal-state uniqueness up to reparameterization

I put this here because sufficiency alone is cheap. A gigantic archive is sufficient too. The more interesting claim is that if a minimal sufficient state exists, then two such states are the same object up to relabeling.

Theorem 2. Suppose \(q_{i,t}^{(\tau,\Delta)}\) and \(q_{i,t}^{\prime(\tau,\Delta)}\) are both minimal sufficient predictive states for the same task \(\tau\) and horizon \(\Delta\). Then there exist full-measure subsets \(S\) and \(S'\) of their supports and a measurable bijection \(b:S \to S'\) such that

$$ q_{i,t}^{\prime(\tau,\Delta)} = b\big(q_{i,t}^{(\tau,\Delta)}\big) $$

almost surely.

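Proof. Because \(q_{i,t}^{\prime(\tau,\Delta)}\) is sufficient and \(q_{i,t}^{(\tau,\Delta)}\) is minimal, there exists a measurable map \(h\) such that

$$ q_{i,t}^{(\tau,\Delta)} = h\big(q_{i,t}^{\prime(\tau,\Delta)}\big) $$

almost surely.

Likewise, because \(q_{i,t}^{(\tau,\Delta)}\) is sufficient and \(q_{i,t}^{\prime(\tau,\Delta)}\) is minimal, there exists a measurable map \(h'\) such that

$$ q_{i,t}^{\prime(\tau,\Delta)} = h'\big(q_{i,t}^{(\tau,\Delta)}\big) $$

almost surely.

Composing these gives

$$ q_{i,t}^{(\tau,\Delta)} = h\big(h'\big(q_{i,t}^{(\tau,\Delta)}\big)\big) \quad \text{almost surely} $$

and

$$ q_{i,t}^{\prime(\tau,\Delta)} = h'\big(h\big(q_{i,t}^{\prime(\tau,\Delta)}\big)\big) \quad \text{almost surely.} $$

Therefore there are full-measure subsets \(S\) and \(S'\) of the respective supports on which the compositions are literally the identity. On those subsets, \(h'\) and \(h\) are inverse measurable maps. Hence \(b := h'|_S\) is a measurable bijection from \(S\) to \(S'\), and the two minimal sufficient states differ only by reparameterization up to null sets. QED.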

5.9 Theorem 3: Sufficient latent-state compression

I put this after the minimality theorem because the point is not merely to worship bigger models. It would probably be impossible to compute an individual’s state transitions based on a literal one-to-one representation of the current state. The whole game is to find the smaller state that keeps the predictive content of the larger history without dragging the whole archive behind it forever.

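Theorem 3. Suppose the fast latent state \(z_{i,t}\) is sufficient for task \(\tau\) in the sense that

$$ Y \mathrel{\perp\mspace{-10mu}\perp} H_{i,\le t} \mid (z_{i,t}, \hat T_i, c_{i,t}, w_t, x_t), $$

where \(Y = Y_{i,t+\Delta}^{(\tau)}\). Then, conditional on \((\hat T_i, c_{i,t}, w_t, x_t)\), the full interaction history can be replaced for predictive purposes by the compressed state \(z_{i,t}\):

$$ P\big(Y \mid H_{i,\le t}, z_{i,t}, \hat T_i, c_{i,t}, w_t, x_t\big) = P\big(Y \mid z_{i,t}, \hat T_i, c_{i,t}, w_t, x_t\big). $$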

Proof. This is immediate from the stated conditional independence. If, conditional on \((z_{i,t}, \hat T_i, c_{i,t}, w_t, x_t)\), the outcome \(Y\) no longer depends on the full history, then the conditional distributions on the two sides are equal. Hence the latent state is a sufficient compression of history for the task once the slow/context bundle is held fixed. QED.
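A toy instance of sufficient compression, as a sketch under assumptions: histories are length-three binary windows, the slow/context bundle is held fixed and therefore omitted, and the outcome probability is constructed to depend on the history only through a count. The functions `compress` and `p_y1_given_history` are hypothetical.

```python
from itertools import product


def compress(history):
    # Hypothetical fast state: the count of positive events in the window.
    return sum(history)


def p_y1_given_history(history):
    # Toy data-generating process: the outcome probability depends on the
    # history only through the count, so compress() is sufficient by construction.
    return (1 + sum(history)) / 5.0


histories = list(product((0, 1), repeat=3))  # all 8 windows, taken as equally likely
p_history = 1.0 / len(histories)

# P(Y = 1 | z), obtained by averaging over all histories that share the same z.
num, den = {}, {}
for hist in histories:
    z = compress(hist)
    num[z] = num.get(z, 0.0) + p_history * p_y1_given_history(hist)
    den[z] = den.get(z, 0.0) + p_history
p_y1_given_z = {z: num[z] / den[z] for z in num}

# Largest disagreement between predicting from the full history and from z alone.
max_gap = max(abs(p_y1_given_history(hist) - p_y1_given_z[compress(hist)])
              for hist in histories)
```

Eight distinct histories collapse to four values of the compressed state with no loss in the conditional law of the outcome. If the outcome also depended on the order of events, the same check would report a nonzero `max_gap`, signalling that the count is not sufficient.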

5.10 Theorem 4: History mediation by the fast state

This is the theorem that makes the slow/fast decomposition more than architecture taste.

Theorem 4. Assume the predictive state factors as

where \(\sigma_i\) is the slow profile term corresponding to the durable embedding \(\hat T_i\), and \(\zeta_{i,t}\) is the fast state corresponding to \(z_{i,t}\), updated from within-window event history. If

$$ Y \mathrel{\perp\mspace{-10mu}\perp} H_{i,\le t} \mid (\sigma_i, \zeta_{i,t}, c_{i,t}, w_t, x_t), $$

then every predictive contribution of within-window history beyond the static profile \(\sigma_i\) is mediated by the fast state \(\zeta_{i,t}\).

Proof. Conditioning on \((\sigma_i, c_{i,t}, w_t, x_t)\), the stated conditional independence implies that any residual dependence of \(Y\) on the full within-window history \(H_{i,\le t}\) must pass through \(\zeta_{i,t}\). Therefore, once the static profile and context are fixed, the predictive contribution of recent history is fully mediated by the fast state. QED.

5.11 Lemma 5: Probe-readout consistency

The probe heads have to connect to the state mathematically, not just aesthetically.

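Lemma 5. Suppose an auxiliary probe \(A_{i,t+\Delta}^{(m,\tau)}\) satisfies Axiom 7. If two histories \(H^{(1)}\) and \(H^{(2)}\) yield the same predictive state

$$ q_{i,t}^{(\tau,\Delta)}\big(H^{(1)}\big) = q_{i,t}^{(\tau,\Delta)}\big(H^{(2)}\big), $$

then, under the same admissible proposition \(x_t\), they induce the same conditional law for the probe:

$$ P\big(A_{i,t+\Delta}^{(m,\tau)} \in \cdot \mid H^{(1)}, x_t\big) = P\big(A_{i,t+\Delta}^{(m,\tau)} \in \cdot \mid H^{(2)}, x_t\big). $$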

Proof. By Axiom 7, the probe depends on the past only through the predictive state and the proposition. If the two histories map to the same predictive state and the same proposition is applied, then the conditional laws coincide immediately. QED.

5.12 Main theorem

Everything above is staged so it can collapse into one statement here without cheating too much. By the time I get to the main theorem, I want the geometry, the evolutionary filter, the transition equivalence, the rollout machinery, the benchmark logic, the minimality claim, the slow/fast mediation claim, and the probe logic all locked into the same object.

Main Theorem (Task-conditioned predictive observer-state world model). Assume Axioms 1 through 7. Then for every task \(\tau\) and prediction horizon \(\Delta\), there exists a finite-dimensional task-conditioned representation

with the following properties:

- Best accessible approximation. The species-level projection is the unique closest accessible representation of the noumenal state.

- Evolutionary coherence. Newly retained axes are precisely those that increase the evolutionary objective defined above.

- Task-equivalence. Propositions with identical task-relevant projected encodings induce identical next-state predictions.

- Recursive simulability. Finite-horizon rollouts of the world model are uniquely defined.

- State advantage. If history contains signal not reducible to static features, then the Bayes-optimal history-aware predictor strictly improves on the Bayes-optimal static predictor under log loss, and under Brier score for binary outcomes.

- Minimal-state uniqueness. Any two minimal sufficient predictive states for the same task and horizon are equal up to measurable bijection on full-measure subsets of their supports.

- Sufficient compression. If the fast latent state is sufficient for the task, then, conditional on the slow/context bundle already carried by the operational state, the full history can be replaced by that latent state without predictive loss.

- Slow/fast mediation. If the predictive state factors into slow and fast parts and the stated conditional independence holds, then the predictive contribution of within-window history beyond static profile is mediated by the fast state.

- Probe consistency. Auxiliary probes that factor through the predictive state induce equal conditional laws whenever the predictive state is equal.

Proof. Property 1 is Lemma 1. Property 2 is Lemma 2. Property 3 is Lemma 3. Property 4 is Lemma 4. Property 5 is Theorem 1. Property 6 is Theorem 2. Property 7 is Theorem 3. Property 8 is Theorem 4. Property 9 is Lemma 5. Together these results establish that the framework admits a mathematically well-defined predictive observer-state world model, that this model can be rolled forward in time, that its explicit state variables matter exactly when the data-generating process contains information that simpler baselines discard, that minimal sufficient state is unique up to reparameterization, and that the slow/fast decomposition has a precise mediation interpretation rather than being mere architecture cosplay. Finally, QED.

5.13 Corollary: Observational proposition ranking

I wanted the proposition-optimization point stated plainly in the formal section, but let’s keep it bounded.

Corollary. Let \(U_\tau\) be any measurable task utility and let \(\mathcal X_{i,t}^{\mathrm{adm}}\) be any admissible candidate proposition set. Then the predictive observer-state world model induces a well-defined observational ranking

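Hence the argmax

$$ \arg\max_{x \in \mathcal X_{i,t}^{\mathrm{adm}}} \operatorname{score}_\theta\big(x \mid s_{i,t}^{(\tau,\Delta)}\big) $$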

is well-defined whenever the candidate set is finite, or compact and the map \(x \mapsto \operatorname{score}_\theta(x \mid s_{i,t}^{(\tau,\Delta)})\) is continuous.

Proof. By Axiom 5 and the readout maps, the predicted next state and its observable consequences are measurable functions of the operational state and the proposition. Therefore any measurable utility of those quantities has a well-defined conditional expectation whenever it is integrable (for instance, bounded), and that expectation induces an ordering over admissible propositions. Existence of an argmax is immediate in the finite case. In the compact case, continuity of the score and the extreme-value theorem give an attained maximum. QED.

This corollary gives me a ranking or search problem. It does not prove that following the ranking causally improves the world. That still requires propensities, randomization, or online experimentation.
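For a finite candidate set the ranking is just a sort by score. A minimal sketch, assuming scalar propositions and a hypothetical `toy_score` standing in for the model's expected utility given the operational state:

```python
def rank_propositions(candidates, score, state):
    """Sort a finite candidate set by predicted score, best first."""
    return sorted(candidates, key=lambda x: score(x, state), reverse=True)


def toy_score(x, state):
    # Hypothetical expected utility: highest when the proposition matches the state.
    return -(x - state) ** 2


candidates = [0.0, 1.0, 2.5, 4.0]
ranking = rank_propositions(candidates, toy_score, state=2.0)
best = ranking[0]
```

Ties are possible (here the two worst candidates score the same), so the argmax is in general a set rather than a single proposition. The ranking is observational: it orders candidates by predicted score and says nothing about what happens if the top candidate is actually deployed.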

5.14 What is proved, and what is not

I end here because this is where the proof should stop, not because this is completely done. The point is to leave you with a result that is strong where it is proved and sharply bounded where it is not, instead of letting the formal section bloat until it starts hallucinating.

This is the strongest result available here.

What is proved:

- the accessible-space projection is well-defined and is the unique best approximation to the noumenal state within the accessible subspace;

- the axis-retention rule has a well-defined novelty test;

- observer-relevant proposition equivalence induces equal next-state prediction;

- the world model can be recursively simulated for finite horizons once the relevant proposition path and exogenous inputs are specified;

- explicit history and explicit state have a principled predictive advantage whenever the future is not conditionally independent of them;

- a minimal sufficient predictive state, if it exists, is unique up to reparameterization on full-measure subsets;

- a fast latent state can stand in for the full history, conditional on the slow/context bundle, whenever the stated conditional independence holds;

- the slow/fast decomposition has a clean mediation interpretation when the stated independence assumptions hold;

- auxiliary probe readouts can be tied to the predictive state rather than bolted on as decoration;

- the model induces a well-defined observational ranking over admissible propositions, with argmax existence under the stated finiteness or compactness-plus-continuity conditions.

What is not proved:

- that the noumenal arena literally exists;

- that qualitative experience is exhausted by the chosen coordinates;

- that the universe is deterministic;

- that retrospective ranking over candidate propositions is already causal policy improvement;

- that \(\hat T_i\) or \(z_{i,t}\) recovers the one true hidden coordinates of a person.

Those remain axioms, interpretations, or empirical research claims. They are not derivable from the mathematics alone.

That is the stopping point. Start with the claim that reality, as it appears to an organism, is the output of an evolved interpretive structure. End with a formal result: once that structure is written as an embedding-plus-predictive-state system, the resulting world model can be defined, proved internally coherent, compressed, minimally identified up to reparameterization on full-measure subsets, rolled forward, probed, ranked over propositions, and tested against baselines.

In one line:

That is the full arc from noumenal excess to observer, from observer to state, and from state to prediction.

Done.

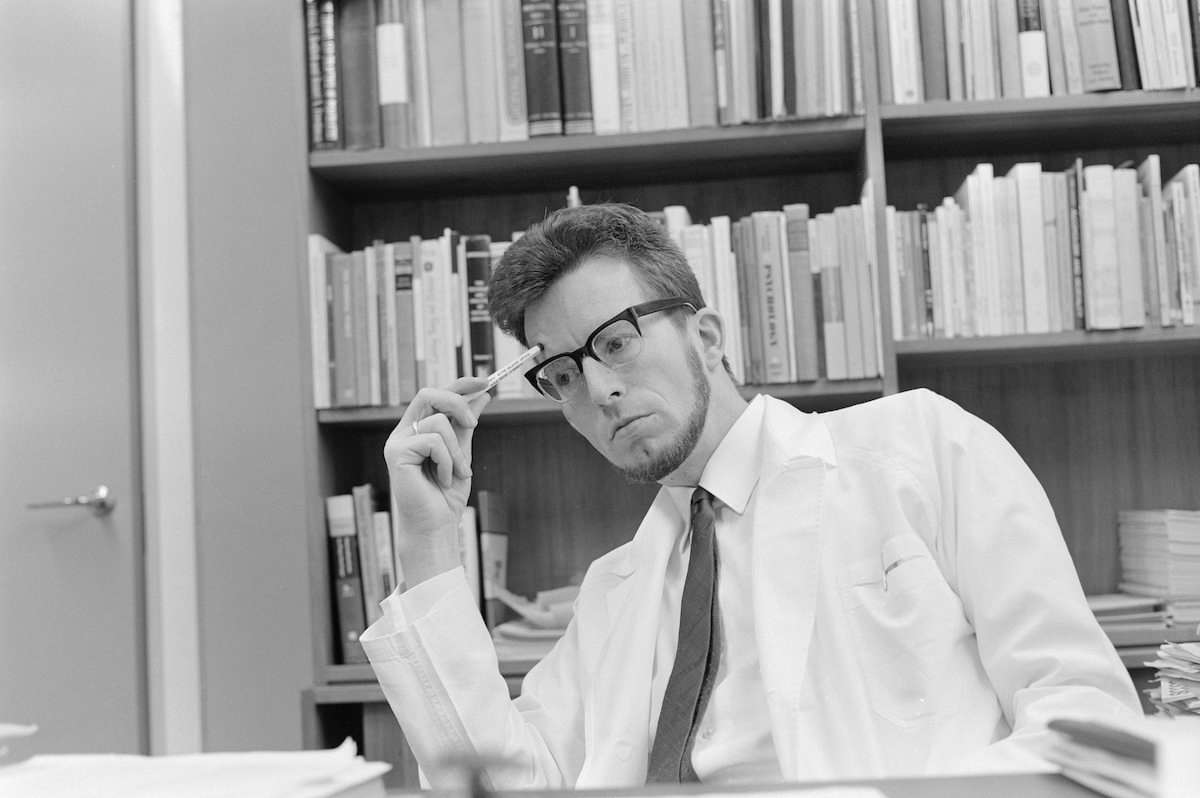

In Memory of Einar Kringlen.

It has been an honor tackling this multi-generational problem with you. To you I owe much.